Prompt Engineering

Enhancing The Capabilities of Large Language Models

Ensuring the Output of AI Tools Matches Your Requirements

Powerful AI language models like OpenAI's ChatGPT and Google's Bard can solve many problems right out of the box. Sometimes, however, they fail to return the exact format and data your business needs.

That's where prompt engineering comes in. By defining strict output format rules, 'training' the model with pre-prepared examples in the prompt, and testing extensively, we can often achieve consistent, reliable output.
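As a rough illustration of those three ingredients, here is a minimal Python sketch that combines a strict format rule, a couple of in-prompt examples, and a check that can be run against each model reply during testing. The classification task, the example messages, and the names `build_prompt` and `is_valid_reply` are all hypothetical, chosen only for illustration; a real project would use your own data and whichever model client you prefer.

```python
import json

# Hypothetical few-shot examples (an assumption for this sketch, not real client data).
EXAMPLES = [
    ("Great product, arrived early!", {"sentiment": "positive", "topic": "delivery"}),
    ("The app crashes every time I log in.", {"sentiment": "negative", "topic": "software"}),
]

# The exact keys every reply must contain.
REQUIRED_KEYS = {"sentiment", "topic"}


def build_prompt(text: str) -> str:
    """Combine a strict format rule, worked examples, and the new input."""
    lines = [
        "Classify the customer message.",
        'Reply with one JSON object with exactly the keys "sentiment" and "topic".',
        "Do not add any other text.",
        "",
    ]
    for message, answer in EXAMPLES:
        lines.append(f"Message: {message}")
        lines.append(f"Answer: {json.dumps(answer)}")
        lines.append("")
    lines.append(f"Message: {text}")
    lines.append("Answer:")
    return "\n".join(lines)


def is_valid_reply(reply: str) -> bool:
    """Return True only if the reply is a JSON object with exactly the required keys."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and set(data) == REQUIRED_KEYS
```

In testing, a check like `is_valid_reply` would typically be run over many sample inputs, so that any reply breaking the format is caught before the prompt goes into production.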

Working With A Prompt Engineer

While ChatGPT prompt engineering is by far the most popular request from clients, your AI prompt engineer will be platform agnostic. They will take the time to understand your needs and identify which platforms best fit the scope and scale of the problem you're trying to solve. That may mean helping you host your own deployment of an open-source model, or simply using a major provider such as Google Vertex AI or OpenAI.

For lower-volume tasks, simply improving the prompts and the strategies for giving ChatGPT data and context is often sufficient. Our prompt engineers will craft those prompts for you and provide the copy you need to get better results from those tools, with no additional setup. In complex fields such as law, the accuracy of prompts is vital.

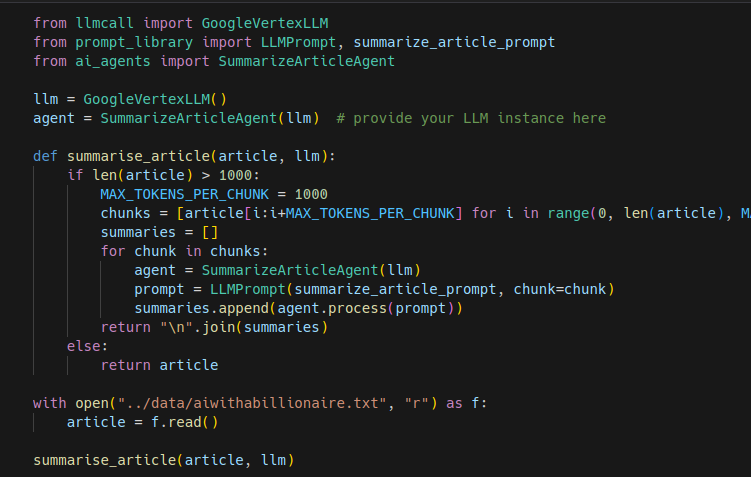

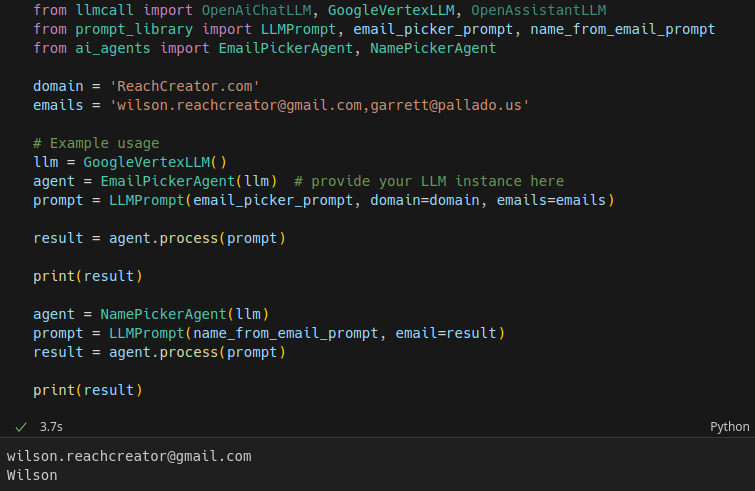

LangChain And Other Complex Prompt Engineering

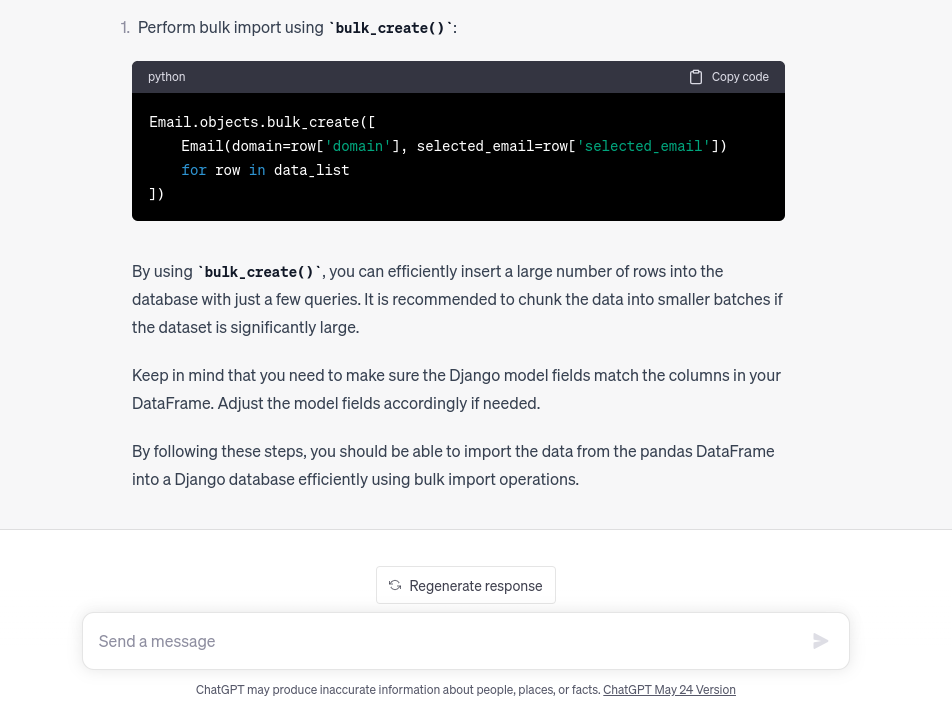

Sometimes the most powerful results from LLMs (large language models like Bard and ChatGPT) come from prompt engineering that combines a series of chain-of-thought prompts with access to other tools (the web, databases, and other APIs that provide the data you need).

When that's required, our development team will work with the prompt engineer to build a fully automated workflow that pieces all those separate prompts and requests for information or data processing together into one neat package for your business to use, whether for AI sales automation or any other task you're struggling to achieve.
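To give a sense of what such a workflow looks like, here is a minimal Python sketch that chains a data lookup, a step-by-step reasoning prompt, and a final answer prompt. Everything in it is an assumption made for illustration: `lookup_customer` stands in for a real database or API call, `fake_llm` stands in for a real model client, and the prompts are placeholders. Frameworks such as LangChain offer their own abstractions for this pattern, which this sketch does not use.

```python
from typing import Callable, Dict


def lookup_customer(customer_id: str) -> Dict[str, str]:
    """Hypothetical stand-in for a database or API lookup."""
    records = {"c-001": {"name": "Ada", "plan": "Pro"}}
    return records.get(customer_id, {})


def run_workflow(customer_id: str, question: str, llm: Callable[[str], str]) -> str:
    """Chain three steps: fetch data, reason step by step, write the final reply."""
    # Step 1: call a tool to fetch the data the model needs.
    record = lookup_customer(customer_id)

    # Step 2: ask the model to reason step by step over that data.
    reasoning = llm(
        f"Customer record: {record}\n"
        f"Question: {question}\n"
        "Think step by step about how to answer."
    )

    # Step 3: feed the reasoning into a second prompt that writes the reply.
    return llm(f"Notes: {reasoning}\nWrite a short, friendly reply to the customer.")


def fake_llm(prompt: str) -> str:
    """Stub so the sketch runs offline; swap in a real model client here."""
    return f"[model output for a {len(prompt)}-character prompt]"


if __name__ == "__main__":
    print(run_workflow("c-001", "Can I upgrade my plan?", fake_llm))
```

Passing the model in as a plain function keeps the workflow independent of any one provider, so the same chain can be pointed at a hosted service or a self-hosted open-source model.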

As always, our team works with almost any platform, from LangChain prompt engineering to building chatbots with Botpress and integrating with Zapier. The team is also familiar with other cutting-edge models such as Claude.